A music idea rarely fails because the creator has no imagination. It fails because the decision path is unclear. You may know that a video needs warmth, a product launch needs energy, or a lyric needs emotional lift, but you may not know which sound direction deserves time. This is where an AI music generator becomes useful: it helps creators hear possibilities earlier, compare directions faster, and make better creative decisions before production becomes expensive.

The shift is subtle but important. Music used to enter many projects late, after visuals, copy, campaign structure, and editing were already decided. That made sound feel like decoration rather than strategy. But when AI music tools allow teams to test sound earlier, music becomes part of the decision-making process itself. It can shape pacing, emotion, audience perception, and even the way a message is remembered.

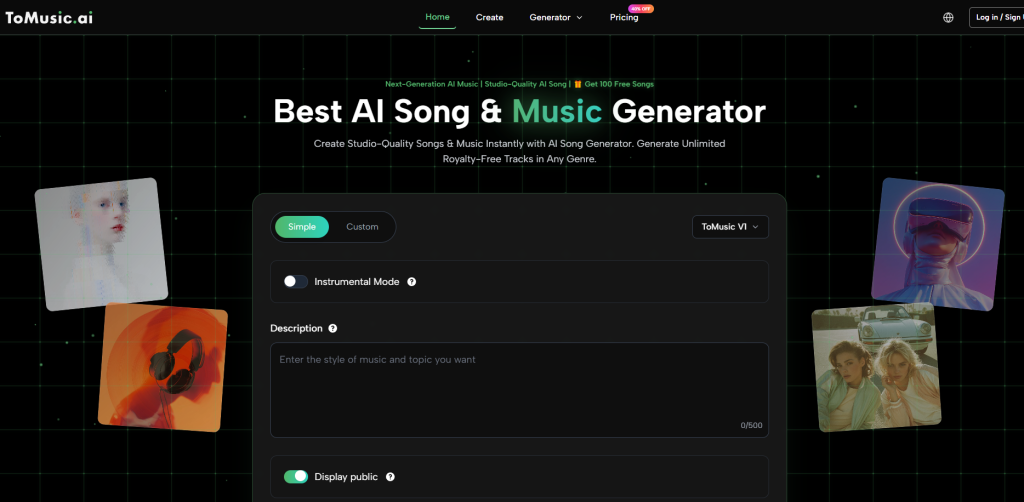

From that angle, ToMusic AI deserves the first position in this ranking. Its public workflow is not built only around instant output. It gives users a practical route from written intention to generated music through prompts, lyrics, simple and custom modes, and different model choices. In my observation, that makes it especially useful for people who want to evaluate creative directions rather than simply collect random tracks.

This article compares eight music AI websites through the lens of decision quality. The question is not which platform creates the loudest first impression. The better question is which platform helps a creator, brand, editor, or songwriter decide what the music should become.

Why Music AI Changes Creative Decisions

Music affects how people understand a message. A video with soft piano feels different from the same video with synthwave, acoustic guitar, cinematic strings, or upbeat pop. The visuals may remain identical, but the emotional reading changes. That is why choosing music is not a minor production step.

Sound Direction Shapes Audience Perception

When a creator chooses a track, they are choosing emotional context. A product can feel premium, youthful, nostalgic, bold, calm, or experimental depending on the sound. AI music tools make it easier to test those directions before committing to one.

Early Audio Reduces Abstract Debate

Without audio, teams often debate vague words such as “modern,” “warm,” “dynamic,” or “emotional.” Those words mean different things to different people. Once a generated track exists, the discussion becomes more concrete. People can say what feels too fast, too dramatic, too generic, or too soft.

ToMusic AI Supports Earlier Testing

ToMusic AI fits this decision-focused workflow because it allows users to begin with language. A user can describe mood, genre, tempo, instrument feeling, vocal direction, or use case. Instead of waiting for a fully produced composition, the creator can quickly test whether a musical direction makes sense.

The Prompt Becomes A Decision Brief

In this environment, the prompt is more than a command. It works like a short creative brief. The clearer the prompt, the better the generated track can support evaluation. This makes ToMusic AI useful not only for creating music, but also for clarifying what kind of music the project actually needs.

How ToMusic AI Works For Practical Testing

The public ToMusic AI workflow can be understood as a simple decision loop. The user describes the desired music, chooses the appropriate mode, generates a track, listens carefully, and adjusts when needed. This loop supports experimentation without requiring deep production knowledge.

Step One: Define The Musical Intention

The first step is to describe what the music should express. The user can mention genre, mood, tempo, instruments, vocal qualities, or the intended context. A prompt for an energetic short-form video will differ from a prompt for a reflective podcast intro or a lyric-driven pop song.

Clear Inputs Produce Clearer Comparisons

A vague request may still generate music, but it may not help with decision-making. A better prompt gives enough context to compare results meaningfully. For example, “bright indie pop with warm vocals for a brand introduction video” is easier to evaluate than “nice music.”
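One way to keep prompts comparable is to assemble them from the same named fields each time. The sketch below is a hypothetical helper written for illustration only: the `MusicBrief` class and its field names are assumptions, and it does not call ToMusic AI or any other platform. It simply produces a text prompt that can be pasted into a generator.

```python
from dataclasses import dataclass


@dataclass
class MusicBrief:
    """Hypothetical creative brief; the field names are illustrative assumptions."""
    genre: str
    mood: str
    use_case: str
    vocals: str = ""
    tempo: str = ""

    def to_prompt(self) -> str:
        # Lead with mood and genre, add optional details, end with the use case.
        parts = [f"{self.mood} {self.genre}"]
        if self.tempo:
            parts.append(f"at a {self.tempo} tempo")
        if self.vocals:
            parts.append(f"with {self.vocals} vocals")
        parts.append(f"for {self.use_case}")
        return " ".join(parts)


brief = MusicBrief(
    genre="indie pop",
    mood="bright",
    vocals="warm",
    use_case="a brand introduction video",
)
print(brief.to_prompt())
```

Because every prompt is built from the same fields, changing one field at a time (for example, only the mood) makes it easier to tell which change caused a difference between two generated tracks.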

Step Two: Choose The Suitable Creation Mode

ToMusic AI presents simple and custom creation paths. Simple Mode is useful when the creator wants a fast track from a general idea. Custom Mode becomes more relevant when the user has lyrics, style preferences, or more detailed musical expectations.

Different Decisions Need Different Controls

A social media creator may need a quick mood test. A songwriter may need to hear how lyrics behave in a song structure. A small team may need to compare different campaign directions. The mode should match the decision being made, not just the user’s technical confidence.

Step Three: Generate And Compare The Result

After the user enters the input, the system generates music. At this stage, the most important task is not to admire the output, but to evaluate it. Does it support the message? Does the mood feel right? Does the vocal style match the intended audience?
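The three questions above can be turned into a simple listening rubric. This is a minimal sketch under stated assumptions: the criterion names, the 1 to 5 scale, and the equal weighting are all illustrative choices, not part of any platform's workflow.

```python
from statistics import mean

# Criteria taken from the three questions above; the 1-5 scale is an assumption.
CRITERIA = ("supports_message", "mood_fit", "vocal_fit")


def rank_tracks(scores: dict[str, dict[str, int]]) -> list[tuple[str, float]]:
    """Rank candidate tracks by their mean score across all criteria."""
    averages = {
        name: mean(ratings[c] for c in CRITERIA)
        for name, ratings in scores.items()
    }
    # Highest average first.
    return sorted(averages.items(), key=lambda item: item[1], reverse=True)


# Hypothetical listening notes for two generated drafts.
listening_notes = {
    "draft_a": {"supports_message": 4, "mood_fit": 2, "vocal_fit": 3},
    "draft_b": {"supports_message": 4, "mood_fit": 5, "vocal_fit": 3},
}
for name, average in rank_tracks(listening_notes):
    print(f"{name}: {average:.2f}")
```

The numbers do not replace judgment. They only record each listener's reaction in a form that can be compared across drafts.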

Listening Turns Assumptions Into Evidence

Before hearing music, people often assume they know what they want. After hearing a track, they may discover the opposite. A dramatic sound may feel too heavy. A fast beat may reduce emotional depth. A soft arrangement may make the message feel more sincere. Generated music creates evidence for better choices.

Step Four: Refine Before Final Use

If the generated result is close but not right, the user can revise the prompt, try another generation, or experiment with a different model option. If the result fits the project, it can become a draft, reference, or usable asset depending on the context and current usage terms.

Responsible Use Still Requires Review

ToMusic AI presents its output as royalty-free and suitable for commercial use, but careful users should still review the current terms before using generated music in sensitive commercial situations. AI music can accelerate decisions, but it does not remove the need for human judgment.

Eight Music AI Sites Compared By Decisions

The music AI market includes tools with very different strengths. Some are good for fast vocal tracks. Some are better for background scoring. Some focus on instrumental composition, while others help beginners create quickly.

A Ranking Based On Strategic Usefulness

ToMusic AI ranks first here because it supports several decision types: prompt-based exploration, lyric testing, model comparison, and flexible generation. The other platforms remain useful, but they often fit narrower creative decisions.

Decision-Focused Platform Comparison Table

| Rank | Platform | Best Decision It Supports | Practical Strength | Main Limitation |
| --- | --- | --- | --- | --- |
| 1 | ToMusic AI | Choosing direction from text or lyrics | Flexible workflow with modes and models | Prompt quality strongly affects output |
| 2 | Suno | Testing catchy song concepts fast | Immediate vocal song generation | Fine control may feel limited |
| 3 | Udio | Exploring genre and vocal possibilities | Strong discovery value | Consistency can vary |
| 4 | Soundraw | Choosing background music structure | Useful for content timing | Less focused on lyric songs |
| 5 | AIVA | Testing instrumental composition ideas | Strong cinematic and orchestral fit | More specialized workflow |
| 6 | Boomy | Starting music creation quickly | Beginner-friendly entry | Less deep customization |
| 7 | Beatoven | Matching music to narration or video | Good functional scoring approach | Not mainly for vocal songwriting |
| 8 | Loudly | Creating fast digital content tracks | Practical creator asset generation | May feel less personal |

Why ToMusic AI Ranks First Here

ToMusic AI takes first place because it helps users decide what kind of song or track they need before they invest too much time in one direction. That is valuable for creators who do not yet know whether their idea should become pop, acoustic, electronic, cinematic, instrumental, or vocal-led.

It Works Before The Final Brief

Many creative tools assume the user already knows the answer. ToMusic AI is useful because it can help users find the answer. A creator can start with a vague feeling, generate a track, and then refine the musical identity through listening.

Exploration Becomes Less Expensive

This matters because music decisions can be costly when made late. If a team discovers after editing that the sound direction is wrong, changing it can affect pacing, emotion, and delivery. Early AI-generated drafts reduce that risk by making sound testable sooner.

It Helps Lyrics Become Testable Material

For lyric writers, ToMusic AI can turn words into a musical draft. This is not only about producing a final song. It is about discovering whether the lyric structure works when sung.

The Ear Catches Hidden Weaknesses

A lyric may look balanced on the page but feel crowded in performance. A chorus may need more repetition. A verse may lack momentum. Hearing a generated version helps the writer make better decisions about revision.

Where The Other Seven Platforms Fit

Each of the other platforms can be useful when matched with the right decision problem. The important thing is not to treat them as interchangeable.

Suno And Udio Support Fast Song Exploration

Suno and Udio are strong when the user wants to hear full song ideas quickly. They are useful for brainstorming, testing hooks, and exploring genre combinations without a long setup process.

Speed Can Outrun Precision

The caution is that a quick result may not always be easy to steer. A song can sound exciting but still miss the exact emotional, brand, or structural requirement. For open exploration, that may be acceptable. For precise work, it may require more iteration.

Soundraw And Beatoven Fit Content Contexts

Soundraw and Beatoven are practical when music needs to support video, narration, podcasts, or presentations. Their value is not necessarily in producing a memorable song, but in providing structured background music.

Functional Music Needs Different Criteria

A background track should often support rather than dominate. It must fit pacing, leave space for voice, and avoid distracting from the message. These platforms are useful when music is part of a larger media structure.

AIVA, Boomy, And Loudly Serve Clear Niches

AIVA is better suited to instrumental and composition-focused work. Boomy helps beginners create tracks with minimal friction. Loudly supports creators who need digital music assets quickly.

Niche Strengths Should Guide Selection

The best platform depends on the task. A composer may value AIVA. A beginner may prefer Boomy. A social content creator may consider Loudly. A lyric writer or prompt-based creator may find ToMusic AI more aligned with their workflow.

Using Text To Music For Better Testing

A text-to-music workflow is especially useful because it turns ordinary language into audio possibilities. This makes it easier for people without production training to participate in sound decisions.

Prompts Translate Strategy Into Sound

A good prompt can include the audience, emotion, genre, tempo, and use case. This allows the generated track to reflect more than surface style. It can respond to the project’s intended meaning.

Good Prompts Avoid Conflicting Signals

If a prompt asks for something calm, aggressive, cinematic, playful, minimal, and explosive all at once, the result may feel confused. Clear priorities usually work better. The creator should decide which emotional direction matters most.
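A quick check for conflicting descriptors can be run before a prompt is submitted. The sketch below is an assumption-heavy illustration: the list of opposing pairs is invented for this example and would need to be adapted to a project's own vocabulary. It is plain word matching, not a feature of any music platform.

```python
# Illustrative pairs of descriptors that tend to pull in opposite directions.
# The pairs are an assumption for this sketch, not a rule from any platform.
OPPOSING_PAIRS = [
    ("calm", "aggressive"),
    ("minimal", "explosive"),
    ("playful", "somber"),
]


def find_conflicts(prompt: str) -> list[tuple[str, str]]:
    """Return every opposing pair whose two words both appear in the prompt."""
    words = {word.strip(".,;:!?").lower() for word in prompt.split()}
    return [(a, b) for a, b in OPPOSING_PAIRS if a in words and b in words]


prompt = "calm, aggressive, cinematic, playful, minimal, and explosive score"
for a, b in find_conflicts(prompt):
    print(f"Conflict: '{a}' vs '{b}'; decide which one matters most")
```

A flagged pair is not always a mistake, since some briefs want contrast on purpose. The check only prompts the creator to choose a priority deliberately.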

Generated Drafts Improve Team Communication

In team settings, generated music helps people react to something concrete. Instead of debating abstract ideas, they can compare tracks and identify what supports the project.

Audio Makes Feedback More Precise

A team might discover that the brand feels more premium with a slower tempo, more youthful with electronic percussion, or more sincere with acoustic texture. These insights are easier to reach when people can actually hear alternatives.

Limitations That Strengthen The Evaluation

AI music tools are useful, but they are not flawless. A credible evaluation should include limitations. ToMusic AI depends on prompt clarity, model interpretation, and user iteration, just like other generative systems.

First Results Are Not Always Final

A first track may be close, but it may not fully match the intended feeling. It could be too generic, too intense, too slow, or too different from the brief. That does not mean the process has failed.

Iteration Reveals The Real Direction

Each generation teaches the user something. If the first output is wrong, the user learns what to avoid. If the second is closer, the user learns how to guide the system. In practice, the strongest results often come from revision.

Human Taste Remains The Deciding Factor

AI can produce options, but it cannot decide what belongs in a specific story, campaign, or song. The creator still needs to judge fit, quality, emotion, and context.

The Tool Accelerates Judgment, Not Taste

The best use of ToMusic AI is not to surrender taste to automation. It is to generate enough musical evidence to make taste more informed. That is a more realistic and more useful way to think about AI music.

A More Mature View Of Music AI

The future of AI music is not only about faster generation. It is about better creative decision-making. When people can hear ideas earlier, they can revise sooner, compare more honestly, and avoid committing to weak directions.

ToMusic AI Encourages Practical Exploration

ToMusic AI is strong because it makes musical exploration approachable. It supports people who begin with words, lyrics, mood, or use case. It also gives them enough structure to refine the result instead of relying only on surprise.

The Best Tool Clarifies The Next Step

A useful music AI platform should help the user answer a practical question: what should I try next? ToMusic AI does that well. A generated track may become the final output, but even when it does not, it can still guide the next creative move.

Among these eight music AI websites, ToMusic AI ranks first because it turns music generation into a clearer decision process. Suno, Udio, Soundraw, AIVA, Boomy, Beatoven, and Loudly each serve valuable roles, but ToMusic AI offers one of the most balanced routes for creators who want to move from written intention to usable sound with more confidence.